What an Agent Actually Is

Most discussions of agentic systems focus on the reasoning engine, the LLM at the center. That’s the cognitive core. It’s also one component of six. And those six components exist inside a system that has its own set of components entirely. Until you can name all of them, you can’t design complete governance for any of them.

Architecture starts with vocabulary. Not patterns, not frameworks. The atomic nouns. What are the things you’re working with? In traditional distributed systems, architects have a settled vocabulary: services, interfaces, data stores, queues, trust boundaries. The vocabulary is stable enough that two architects from different organizations can review the same system and be talking about the same things.

Agentic systems don’t have that yet. Everyone has their own mental model, and most of them are incomplete. AFAS (Architecture Framework for Agentic Systems) starts with a primitives inventory, a two-level model that defines what an agent IS and what a multi-agent system IS. The rest of the framework operates on this vocabulary.

Level 1: The Agent

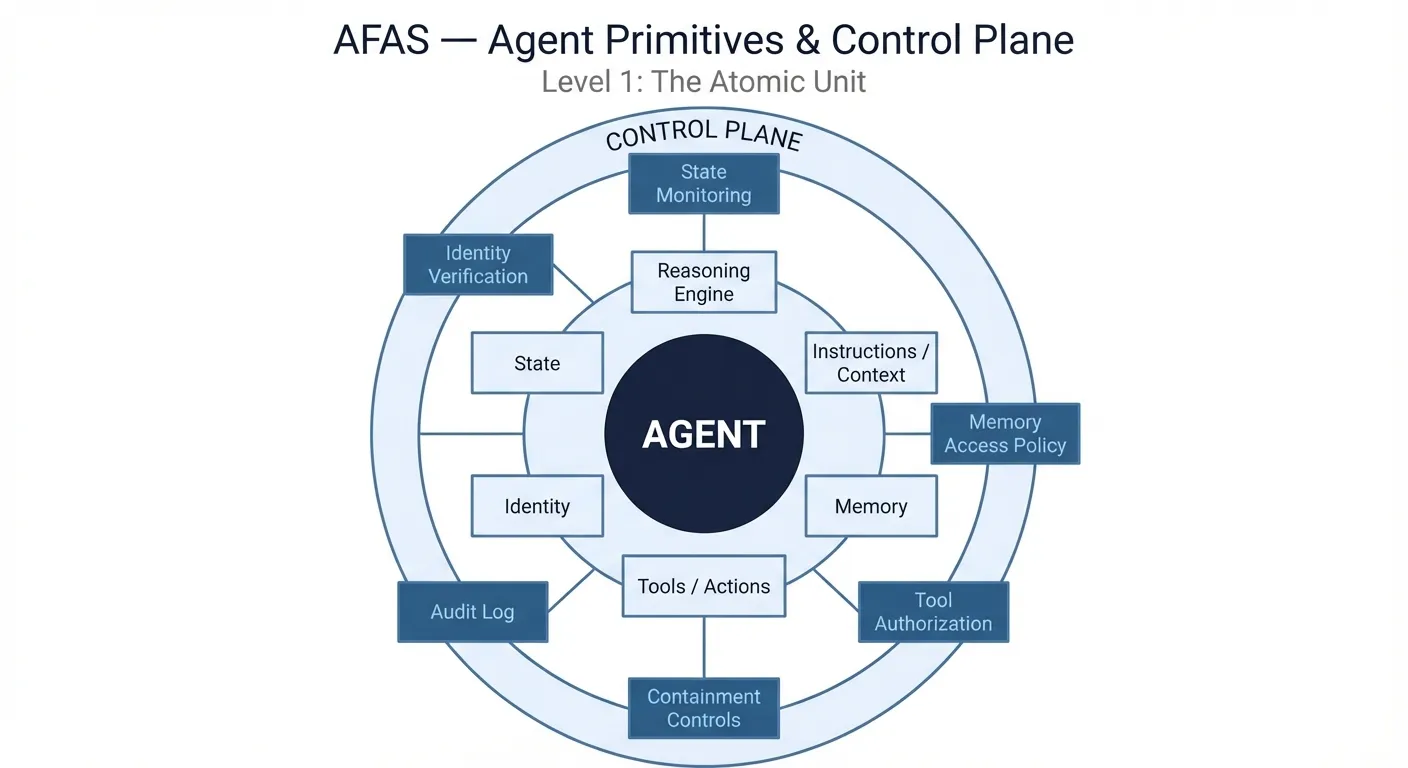

An agent has six primitives, the intrinsic components that make it an agent. These exist whether you’ve designed them deliberately or not.

Notice what’s not in this list: policy, permissions, guardrails. Those are control plane concerns, not agent primitives. That’s a deliberate design decision. The capability gap section explains why it matters.

The control plane is the governance infrastructure that operates outside any individual agent: enforcement points, authorization policies, monitoring, and audit mechanisms that determine what agents are permitted to do. It’s not prompt text, not model weights, not agent code. It’s the structural layer that governs how each primitive is allowed to operate. Think of it as the difference between what the agent is and what the agent is allowed to do in a given context.

Reasoning engine. The LLM or decision model. The cognitive core. What thinks. In a multi-agent system, there are many of these (one per agent, not a single system brain). Most architects fixate on this component. It’s important. It’s also just one of six.

Instructions and context. The system prompt plus the context window, meaning what the agent knows right now. Two distinct parts: the system prompt is relatively static (standing instructions, role definition, rules of engagement, a deployment concern); the context window is dynamic (retrieved documents, conversation history, tool outputs, an operational concern). Both condition behavior at invocation time, but they’re governed differently. The context window is not memory. Conflating the two is one of the more common design errors in early agentic systems, and it compounds quickly. A context window treated as memory produces bloated token costs, latency spikes, and context-window amnesia: the agent simply doesn’t know what happened before the current invocation. Context is an ephemeral, bounded injection at runtime; memory requires persistent state management, lifecycle policies, and retrieval mechanisms. They fail differently, so they’re governed differently.

Memory. Persistent knowledge across invocations. Memory has three forms: episodic (what happened), semantic (what the agent knows), and procedural (how to do things). Memory persists where the context window resets. The access policy for memory (what the agent can read, write, and share) is a governance concern, not an agent-level primitive. That distinction matters.

Tools and actions. The functions the agent can invoke: APIs, write operations, spawning sub-agents, external integrations. This is the agent’s capability boundary, what it CAN do. Not the same as what it’s ALLOWED to do. That distinction is critical and worth slowing down on.

Identity. Name, role, declared purpose, claimed capabilities. What the agent IS, or at least what it claims to be. The primitive is claimed identity. Verified identity (authenticated, attested, authorized) is a control plane concern. An agent can claim any identity. Whether that claim is validated is a structural design decision.

State. Current operational status: active, waiting, executing, idle, error, suspended. Every agent has state whether it’s tracked or not. Untracked state is a governance gap, not an absent primitive.
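One way to make the inventory concrete is to write the six primitives down as a plain data structure. This is a minimal sketch, not an AFAS-defined schema: the class names, field names, and example values are all assumptions made for illustration. The thing to notice is what is missing. There is no policy or permissions field, and the identity is only a claim.

```python
from dataclasses import dataclass, field
from enum import Enum


class AgentState(Enum):
    # The operational statuses named in the text.
    ACTIVE = "active"
    WAITING = "waiting"
    EXECUTING = "executing"
    IDLE = "idle"
    ERROR = "error"
    SUSPENDED = "suspended"


@dataclass
class ClaimedIdentity:
    # Claimed, not verified: verification belongs to the control plane.
    name: str
    role: str
    purpose: str
    claimed_capabilities: list[str] = field(default_factory=list)


@dataclass
class Agent:
    reasoning_engine: str                  # hypothetical model identifier
    system_prompt: str                     # static half of instructions and context
    identity: ClaimedIdentity
    context_window: list[str] = field(default_factory=list)  # dynamic, per invocation
    memory: dict[str, list[str]] = field(
        default_factory=lambda: {"episodic": [], "semantic": [], "procedural": []}
    )
    tools: set[str] = field(default_factory=set)              # capability boundary
    state: AgentState = AgentState.IDLE

    def end_invocation(self) -> None:
        # Context is ephemeral and is dropped; memory is left untouched.
        self.context_window.clear()
        self.state = AgentState.IDLE


agent = Agent(
    reasoning_engine="example-model",
    system_prompt="You triage support tickets.",
    identity=ClaimedIdentity("triage-1", "triage", "route tickets"),
    tools={"lookup_ticket"},
)
agent.context_window.append("tool output: ticket 42 is open")
agent.memory["episodic"].append("routed ticket 42")
agent.end_invocation()
```

After `end_invocation()`, the context window is empty while the episodic entry survives, which is the context/memory split described above in miniature.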

The Capability Gap

The distinction between what an agent can do and what it’s allowed to do is worth dwelling on because it’s where architectural decisions get made that most teams don’t realize they’re making.

When you define an agent’s tool list, you’re defining its capability boundary, the universe of what it could do if invoked in any context. When you define tool authorization at the control plane, you’re defining what it’s permitted to do in a specific context, under specific conditions, with specific constraints.

Capability without authorization policy is a loaded weapon with no safety. Authorization policy without architectural enforcement is a hope, not a guarantee. Architectural enforcement means the tool execution request is intercepted by a middleware layer or gateway that validates the agent’s authorization token and context before the API is ever reached, not after. That enforcement point sits at a different address in the architecture than the system prompt.

Most teams today define capability. Fewer design authorization policy. Almost none enforce it architecturally, particularly in early-stage deployments where behavioral guardrails feel sufficient. This is the behavioral vs. structural safety gap, playing out at the primitive level. You can put the policy in the system prompt, or you can put it in the system.

Consider an agent with a process_payment tool. Capability means the function exists in its registry. Authorization means the control plane has verified: is this agent permitted to call this tool in this context? For what amount? Under what delegation authority? With what audit requirement? Those aren’t prompt concerns. They’re architectural ones.
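The process_payment case can be sketched as a gateway that sits between the agent and the tool. This is an illustrative sketch under stated assumptions, not a reference implementation: `ToolGateway`, `Grant`, the agent id, and the 500.0 limit are hypothetical names and values, and a real deployment would validate a signed authorization token rather than trust a bare agent id.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    # One control plane authorization entry (hypothetical shape).
    agent_id: str
    tool: str
    max_amount: float
    delegated_by: str
    audit_required: bool


class AuthorizationError(Exception):
    pass


class ToolGateway:
    """Intercepts tool calls before the underlying function is reached."""

    def __init__(self, registry, grants):
        self._registry = registry  # capability: what exists
        self._grants = {(g.agent_id, g.tool): g for g in grants}  # authorization
        self.audit_log = []

    def invoke(self, agent_id, tool, amount):
        if tool not in self._registry:
            raise AuthorizationError(f"no such capability: {tool}")
        grant = self._grants.get((agent_id, tool))
        if grant is None:
            raise AuthorizationError(f"{agent_id} has no grant for {tool}")
        if amount > grant.max_amount:
            raise AuthorizationError(f"{amount} exceeds limit {grant.max_amount}")
        if grant.audit_required:
            self.audit_log.append((agent_id, tool, amount, grant.delegated_by))
        # Only now is the tool itself called.
        return self._registry[tool](amount)


def process_payment(amount):
    # Stand-in for the real payment API.
    return f"paid {amount:.2f}"


gateway = ToolGateway(
    registry={"process_payment": process_payment},
    grants=[Grant("billing-agent", "process_payment", 500.0, "orchestrator", True)],
)
```

A call such as `gateway.invoke("billing-agent", "process_payment", 120.0)` passes every check and is audited. The same call for 900.0, or from an agent with no grant, raises before `process_payment` runs. Nothing in the agent’s prompt is involved in that decision.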

Tools are the obvious example. The same gap exists for memory (which agents can read which stores, under what conditions, with what retention constraints). For delegation (which agents can pass authority to which others, how far down the chain, with what scope limits). For communication (which agents can message which endpoints). If it’s reachable but not structurally constrained, it’s a capability leak.
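The "reachable but not structurally constrained" test reduces to a set difference. The sketch below assumes you can list what an agent can reach and what the control plane actually constrains; the resource names are invented for the example.

```python
# Everything an agent can reach, grouped by kind (hypothetical inventory).
reachable = {
    "tools": {"process_payment", "send_email"},
    "memory": {"customer_store", "episodic_log"},
    "delegation": {"refund-agent"},
    "communication": {"event_bus", "partner_webhook"},
}

# What the control plane actually constrains (also hypothetical).
constrained = {
    "tools": {"process_payment"},
    "memory": {"customer_store", "episodic_log"},
    "delegation": set(),
    "communication": {"event_bus"},
}


def capability_leaks(reachable, constrained):
    """Return, per kind, what is reachable but has no structural constraint."""
    leaks = {}
    for kind, resources in reachable.items():
        missing = resources - constrained.get(kind, set())
        if missing:
            leaks[kind] = missing
    return leaks


leaks = capability_leaks(reachable, constrained)
```

In this inventory, `send_email`, `refund-agent`, and `partner_webhook` come back as leaks. Memory is fully covered and does not appear.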

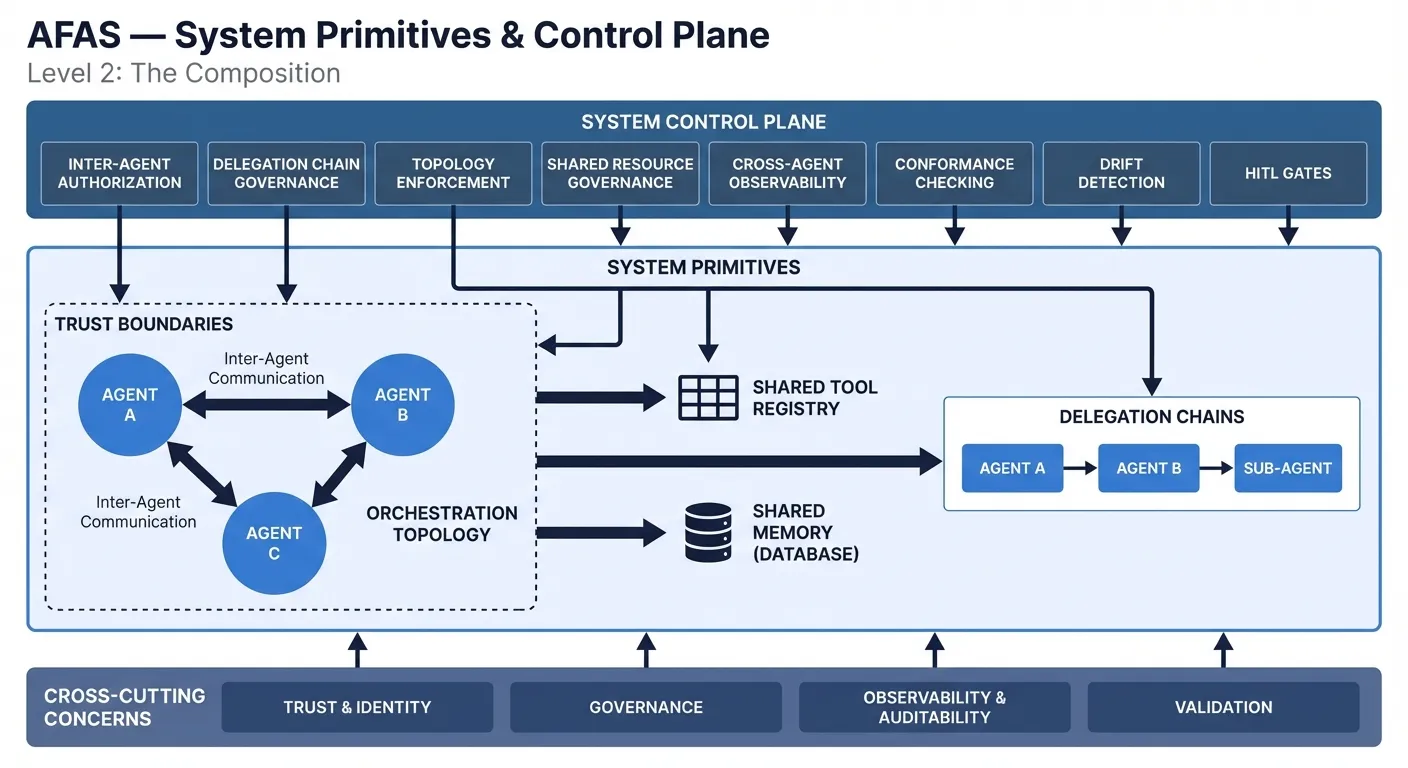

Level 2: The System

When you compose agents into a system, new primitives emerge that don’t exist at the agent level. A multi-agent system is not just a collection of agents. It has its own architecture. You can govern every individual agent perfectly and still have an ungoverned system if you haven’t addressed the system-level primitives.

System Primitives

These are the structural components that exist at the system level, the raw architectural nouns.

Agents. The instantiated population, the set of agents operating in the system, each carrying its own Level 1 primitives.

Shared memory. Cross-agent memory resources: shared semantic stores, shared episodic logs, shared knowledge bases. Different from per-agent memory. Who reads what, who writes what, and which conflict and access policies apply are system-level governance concerns.

Shared tool registry. The tool manifest for the system, with capabilities available across agents. Enables capability discovery. Governance defines what’s approved for which agents in which contexts.

Inter-agent communication channels. The protocols and channels agents use to interact: MCP (Model Context Protocol), A2A (Agent2Agent) protocols, direct invocation, event bus, message queue. How agents talk, not what they say. Protocol choice has architectural consequences for observability, replay, and auditability.
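For shared memory, the access and conflict questions can be sketched as a store that checks both on every call. This is a hedged illustration only: `SharedStore` is a hypothetical class, and rejecting stale writes by version number is one assumed conflict policy among several a team could choose.

```python
class AccessDenied(Exception):
    pass


class WriteConflict(Exception):
    pass


class SharedStore:
    """A shared key-value store with per-agent access lists and version checks."""

    def __init__(self, readers, writers):
        self._readers = set(readers)
        self._writers = set(writers)
        self._data = {}  # key -> (version, value)

    def read(self, agent_id, key):
        if agent_id not in self._readers:
            raise AccessDenied(f"{agent_id} may not read")
        # Unknown keys read as version 0 with no value.
        return self._data.get(key, (0, None))

    def write(self, agent_id, key, value, expected_version):
        if agent_id not in self._writers:
            raise AccessDenied(f"{agent_id} may not write")
        current_version, _ = self._data.get(key, (0, None))
        if current_version != expected_version:
            # Assumed conflict policy: reject writes based on a stale read.
            raise WriteConflict(f"{key} is at version {current_version}")
        self._data[key] = (current_version + 1, value)
        return current_version + 1


store = SharedStore(readers={"planner", "researcher"}, writers={"planner"})
```

Here `planner` can read and write while `researcher` can only read. A write that names an outdated version is rejected instead of silently overwriting another agent’s update.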

System Structural Concerns

These aren’t primitives in the same sense. They’re structural properties of the composition that require explicit design. Skipping them doesn’t mean they don’t exist; it means they exist without governance.

Orchestration topology. How agents are arranged and relate: hierarchical, peer-to-peer, pipeline, event-driven, hub-and-spoke. This defines who routes to whom. It’s a design decision with significant governance implications: changing the topology changes the delegation structure.

Trust boundaries. Authorization scopes between agents, defining what can call what and under what conditions. These are structural decisions made at design time and enforced at runtime. Trust boundaries that exist only in documentation are not trust boundaries.

Delegation chains. How authority passes from orchestrator to sub-agent to sub-sub-agent. The authorization inheritance path. Delegation chains are recursive by nature, and governance must address not just depth but attenuation: authority should narrow at each hop, not pass through unchanged.

Consider the enforcement requirement concretely: a top-level orchestrator authorized to process payments up to $10,000 delegates a sub-task to a specialist agent. That agent should receive narrowed authority, scoped to a specific transaction type and capped at a lower amount. If that agent delegates further, the scope narrows again. Without attenuation policy, a tier-3 agent in a long chain ends up with authority that was never explicitly granted, just never explicitly revoked. That’s an authority leak, and it’s a system-level structural failure, not an agent-level bug.
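The attenuation rule in that example can be sketched as an authority object that can only produce narrower copies of itself. The $10,000 cap comes from the example above; the `Authority` class, the agent names, the transaction types, and the lower caps are assumptions made for illustration. This sketch enforces that authority never widens. Whether every hop must strictly narrow is a policy choice it leaves open.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Authority:
    holder: str
    max_amount: float
    transaction_types: frozenset
    depth: int = 0  # number of hops from the original grant

    def delegate(self, to, max_amount, transaction_types):
        """Return an authority for a sub-agent, refusing any widening."""
        requested = frozenset(transaction_types)
        if max_amount > self.max_amount:
            raise PermissionError("cannot delegate a higher amount cap")
        if not requested <= self.transaction_types:
            raise PermissionError("cannot delegate transaction types not held")
        return Authority(to, max_amount, requested, self.depth + 1)


orchestrator = Authority(
    "orchestrator", 10_000, frozenset({"refund", "invoice", "payout"})
)
specialist = orchestrator.delegate("refund-specialist", 2_000, {"refund"})
tier3 = specialist.delegate("refund-checker", 250, {"refund"})
```

Because each authority is derived from its parent, a tier-3 agent cannot end up with scope that was never granted: `specialist.delegate("x", 5_000, {"refund"})` and `specialist.delegate("x", 100, {"payout"})` both raise `PermissionError`.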

Telemetry and observability. Distributed tracing, audit logging, and state telemetry that spans the system. Individual agent logs are insufficient. You need correlation that survives delegation chains and topology changes. No individual agent can produce this view. It’s an emergent property of the composition, and it must be designed as a structural component. If it’s not in the architecture, it doesn’t exist.
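Correlation that survives delegation usually means passing a shared trace identifier down the chain and logging to a sink that no single agent owns. The sketch below is a simplified, assumed design using only the standard library. `TraceContext`, `record`, and `chain_for` are hypothetical names, and a production system would more likely use an established tracing standard.

```python
import uuid
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class TraceContext:
    trace_id: str                   # constant for the whole delegation chain
    span_id: str                    # unique per hop
    parent_span_id: Optional[str]   # links a hop to the hop that delegated to it
    agent_id: str

    @staticmethod
    def start(agent_id):
        return TraceContext(uuid.uuid4().hex, uuid.uuid4().hex, None, agent_id)

    def child(self, agent_id):
        # A delegated agent inherits the trace id and records its parent span.
        return TraceContext(self.trace_id, uuid.uuid4().hex, self.span_id, agent_id)


system_log = []  # system-level sink, not owned by any one agent


def record(ctx, event):
    system_log.append({
        "trace_id": ctx.trace_id,
        "span_id": ctx.span_id,
        "parent_span_id": ctx.parent_span_id,
        "agent": ctx.agent_id,
        "event": event,
    })


def chain_for(trace_id):
    """List the agents that logged under one trace id, in log order."""
    return [e["agent"] for e in system_log if e["trace_id"] == trace_id]


root = TraceContext.start("orchestrator")
record(root, "goal received")
hop1 = root.child("specialist")
record(hop1, "sub-task accepted")
hop2 = hop1.child("tier-3")
record(hop2, "sub-task accepted")
```

`chain_for(root.trace_id)` reconstructs the whole chain from the shared log. No individual agent’s own log could produce that view.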

The Structural Pairing

The system primitives are not just scaled-up agent primitives, and governing individual agents isn’t enough. Delegation chains don’t exist inside any individual agent. They’re a property of the composition. Consider an agent that escalates a ticket to a specialist, which routes it to a third-tier resolution agent. The authorization question at each hop (was this delegation permitted? under what constraints? with what scope?) is a system-level question no individual agent can answer. You can instrument every agent and enforce every tool policy at the agent level and still have no view into whether the delegation chain as a whole stayed within authorized scope.

That’s the point of the primitives model: primitives are the nouns, and the control plane holds the verbs. The primitive tells you what exists. The control plane tells you what’s permitted.

Every primitive has a corresponding governance concern. Not a nice-to-have, but a structural pairing.

Claimed identity pairs with identity verification. Tool capability pairs with tool authorization. Agent state pairs with state monitoring. Delegation chains pair with delegation chain governance. Every primitive that isn’t paired with a corresponding control represents a governance gap.

Structural safety in practice means that for every primitive that exists, there is a governance mechanism that must be designed. You can either design it deliberately or leave it as a gap. Behavioral safety (prompt instructions, model behavior, fine-tuning) covers gaps opportunistically. Structural safety covers them by design.

Once you have the vocabulary, you can conduct a real architectural review. Look at a proposed agentic system and ask: what are the agent primitives? What are the system primitives? For each primitive, where is the corresponding control plane element? If you can’t answer that question for a primitive, you’ve found a governance gap.
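That review can be run as a simple checklist. The primitive names below come from the inventory in this post; the `controls` mapping is a hypothetical set of review notes for one imagined system, and the function is only a sketch of the question being asked. Structural concerns such as delegation chains could be added to the list in the same way.

```python
# Primitive inventory from the text.
AGENT_PRIMITIVES = [
    "reasoning engine", "instructions and context", "memory",
    "tools and actions", "identity", "state",
]
SYSTEM_PRIMITIVES = [
    "agents", "shared memory", "shared tool registry",
    "inter-agent communication channels",
]


def governance_gaps(primitives, controls):
    """Return primitives with no control plane element recorded against them."""
    return [p for p in primitives if not controls.get(p)]


# Hypothetical review notes: primitive -> the control that governs it.
controls = {
    "tools and actions": "tool authorization at a gateway",
    "identity": "identity verification",
    "state": "state monitoring",
    "shared tool registry": "per-agent approval list",
}

agent_gaps = governance_gaps(AGENT_PRIMITIVES, controls)
system_gaps = governance_gaps(SYSTEM_PRIMITIVES, controls)
```

For this imagined system the review turns up gaps for the reasoning engine, instructions and context, and memory at the agent level, and for agents, shared memory, and communication channels at the system level.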

That’s a design review. Right now, most teams don’t have the vocabulary to conduct one.

What’s Next

The primitives model gives you the vocabulary. The next question is what holds the system together in operation.

When an orchestrator decomposes a goal and assigns work down a delegation chain, each agent receives instructions, not the intent behind them. That translation is lossy by default. The sub-agent executes correctly against its local task. The original goal gets dropped somewhere in the chain.

That gap is structural. It compounds at every delegation boundary. And it’s exactly what the next post addresses: the Intent Thread, the design pattern that keeps multi-agent systems connected to their original goals across the delegation chains you just mapped.

AFAS (Architecture Framework for Agentic Systems). Second post in an ongoing series. The previous post: Building Agentic Systems Without a Blueprint.