The Intent Thread: How to Keep Agentic Systems Connected to Their Goals

Here’s a failure mode you’ve probably seen. An orchestrator receives a request: “Summarize the security incident for executive leadership, emphasizing business impact.” It breaks the work down and hands a sub-agent a task: “Summarize this incident report.” The sub-agent does exactly that. The output is accurate, well-structured, and completely missing the business impact framing. Task completed. Intent failed.

Nobody made an error. The orchestrator delegated correctly. The sub-agent executed correctly. The system worked as designed, and still produced the wrong result.

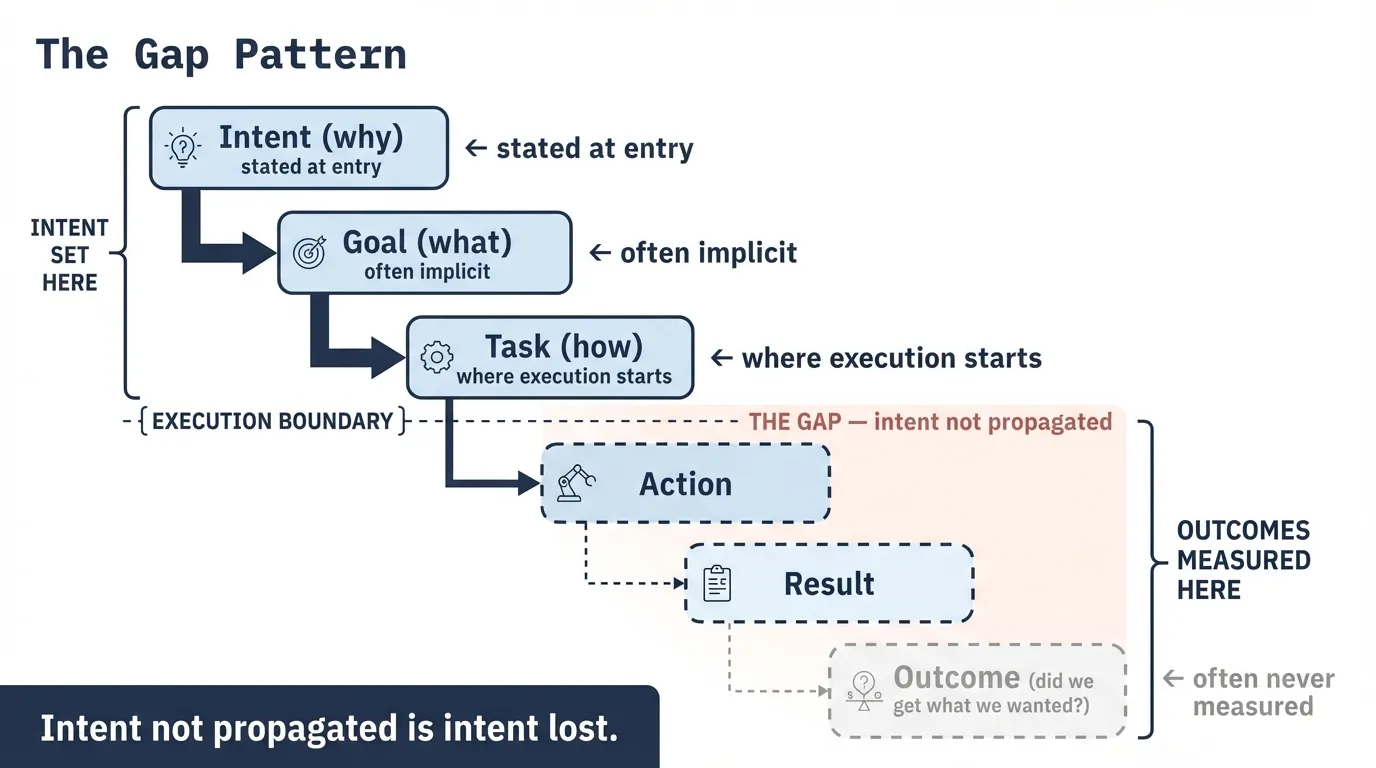

Why Intent Atrophies

Every delegation boundary is an opportunity for the original goal to get diluted, reinterpreted, or dropped entirely. When an orchestrator breaks a goal into tasks and assigns them to sub-agents, it translates intent into instructions. That translation is lossy by default. The sub-agent receives the instruction, not the intent behind it. Its context window doesn’t carry the original goal unless someone explicitly puts it there.

In most multi-agent architectures, nobody does. The orchestrator holds the goal. Sub-agents hold tasks. The connection between them is implicit, at best encoded loosely in a system prompt, at worst assumed.

This is intent atrophy: the progressive loss of goal alignment as work moves through delegation layers. You can write the clearest possible instructions and still lose the thread the moment those instructions get decomposed further, routed to a different agent, or evaluated against the wrong rubric.

If a downstream agent can succeed on its local rubric while violating the upstream success criteria, you don’t have a prompting problem. You have intent atrophy.

Intent atrophy is a structural problem. It requires a structural solution.

The Intent Thread Pattern

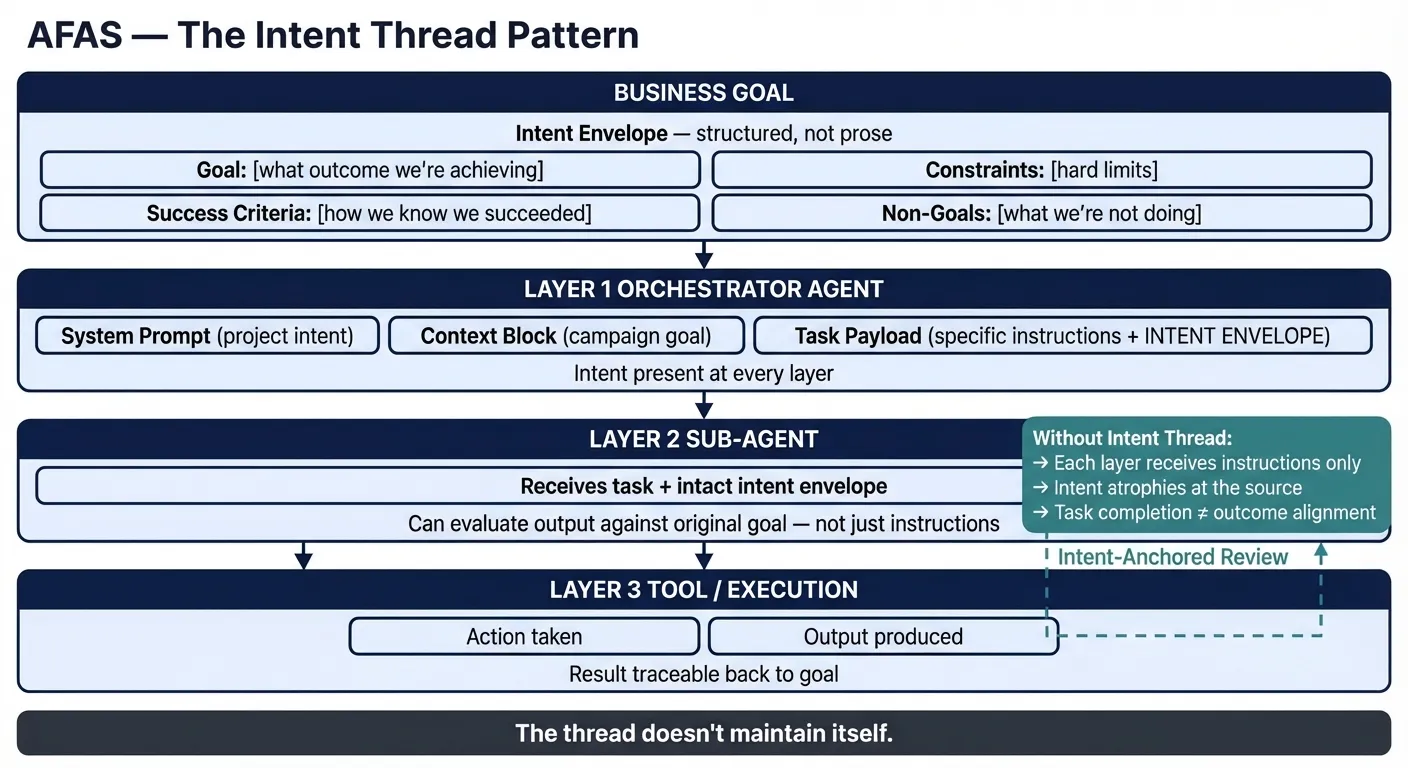

The Intent Thread is a design pattern for propagating goal context across delegation boundaries. It has four components.

1. Intent Envelope

Every task payload carries a structured intent block, not just instructions. The envelope contains four fields:

- Goal: What outcome are we actually trying to achieve?

- Constraints: What are the hard limits on how we get there?

- Success criteria: How do we know we succeeded?

- Non-goals: What are we explicitly not trying to do?

The envelope travels with the task as a structured block in the task payload: a JSON object appended to the task assignment and carried through the delegation chain unchanged. The instructions change at each hop. The envelope doesn’t.

```json
{
  "goal": "Summarize this security incident for executive leadership, emphasizing business impact",
  "constraints": ["3 pages or fewer", "avoid technical jargon", "do not speculate on root cause"],
  "success_criteria": "A CISO with no technical background can explain the business risk after reading this",
  "non_goals": ["technical root cause analysis", "attribution", "remediation recommendations"]
}
```

When the orchestrator decomposes work and assigns sub-tasks, each sub-task inherits this envelope from the parent. A sub-agent tasked with “extract financial impact data” receives narrowed instructions, but the same envelope. It knows what it’s ultimately serving.

The envelope isn’t decorative metadata. It’s the connective tissue that lets any agent in the chain evaluate its output against the original goal rather than just the immediate instruction.
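One way to make “the instructions change, the envelope doesn’t” hard to violate is to model the envelope as an immutable value that every sub-task shares by reference. The sketch below is illustrative only: `IntentEnvelope`, `Task`, and `delegate` are hypothetical names, not part of any framework, and the field names simply mirror the four envelope fields.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class IntentEnvelope:
    """Immutable intent block; fields mirror the four envelope fields."""
    goal: str
    constraints: Tuple[str, ...]
    success_criteria: str
    non_goals: Tuple[str, ...]


@dataclass(frozen=True)
class Task:
    """Hypothetical task payload: instructions vary per hop, the envelope does not."""
    instructions: str
    envelope: IntentEnvelope


def delegate(parent: Task, narrowed_instructions: str) -> Task:
    """Create a sub-task that inherits the parent's envelope unchanged."""
    return Task(instructions=narrowed_instructions, envelope=parent.envelope)


envelope = IntentEnvelope(
    goal="Summarize this security incident for executive leadership, "
         "emphasizing business impact",
    constraints=("3 pages or fewer", "avoid technical jargon",
                 "do not speculate on root cause"),
    success_criteria="A CISO with no technical background can explain "
                     "the business risk after reading this",
    non_goals=("technical root cause analysis", "attribution",
               "remediation recommendations"),
)

root = Task("Summarize the security incident", envelope)
sub = delegate(root, "Extract financial impact data")
```

Because both dataclasses are frozen, a sub-agent that tries to rewrite the goal gets an exception instead of silently producing a drifted envelope.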

2. Layered Context Injection

Context that supports intent has a natural hierarchy, and each layer belongs in a distinct position in the agent’s context window:

- Invariant policy frame. Always present, establishes operating constraints and role. Changes only at deployment. Your system prompt equivalent, whatever the platform calls it.

- Goal context block. Session-scoped, provides the “why” for this body of work. Carries the intent envelope and success criteria for the current operation.

- Task instruction. Immediate, actionable. The specific instruction for this invocation.

Most implementations collapse this into a single system prompt or a single task description. High-level intent then either crowds out task-specific instructions or gets buried beneath them. Separating the layers lets each one do its job without competing, and lets you reason about token budget by layer, injecting only the goal context relevant to the current delegation depth rather than everything at every level.
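As a rough sketch of that separation, the three layers can be kept as distinct named strings and only merged into a message list at the last moment. Everything here is an assumption for illustration: the layer names, the `role`/`content` message shape (a common chat format, not a specific vendor’s API), and character count as a crude stand-in for token count.

```python
import json
from typing import Dict, List


def build_layers(policy_frame: str, envelope: dict, task_instruction: str) -> Dict[str, str]:
    """Keep each layer separate so it can be budgeted and swapped independently."""
    goal_context = "Intent envelope for this body of work:\n" + json.dumps(envelope, indent=2)
    return {
        "policy_frame": policy_frame,          # invariant, changes only at deployment
        "goal_context": goal_context,          # session-scoped "why"
        "task_instruction": task_instruction,  # immediate, per-invocation
    }


def to_messages(layers: Dict[str, str]) -> List[Dict[str, str]]:
    """Render layers into an ordered message list, most stable layer first."""
    return [
        {"role": "system", "content": layers["policy_frame"]},
        {"role": "user", "content": layers["goal_context"]},
        {"role": "user", "content": layers["task_instruction"]},
    ]


def layer_sizes(layers: Dict[str, str]) -> Dict[str, int]:
    """Character count per layer, a crude proxy for per-layer token budget."""
    return {name: len(text) for name, text in layers.items()}


layers = build_layers(
    policy_frame="You are a reporting agent. Never disclose customer data.",
    envelope={"goal": "Summarize this security incident for executive leadership, "
                      "emphasizing business impact"},
    task_instruction="Extract financial impact data",
)
messages = to_messages(layers)
sizes = layer_sizes(layers)
```

Keeping the layers addressable by name is what makes it practical to trim or swap the goal context at a given delegation depth without touching the policy frame or the task instruction.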

3. Intent-Anchored Review

QA steps that evaluate output quality without evaluating goal alignment are checking the wrong thing. An intent-anchored review step receives two inputs: the output being evaluated, and the original intent envelope that spawned the work. The evaluation rubric derives from the stated success criteria, not from generic quality heuristics.

This requires that intent be correctly scoped at delegation boundaries. A macro-intent like “reduce Q3 support escalations by 40%” cannot be evaluated directly by a sub-agent processing a single ticket. The orchestrator’s job is to translate: “reduce escalations by 40%” becomes “resolve this ticket without transferring to tier 2” as the localized success criterion the sub-agent can actually evaluate. Both levels of intent travel in the envelope, the macro goal and the micro success criterion. The sub-agent evaluates against the micro criterion; the orchestrator aggregates results against the macro goal.

Quality and alignment are orthogonal. A well-written, technically accurate document can completely miss the mark on intent. If your review rubric can’t detect that, you’re measuring outputs, not outcomes.
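A minimal sketch of an intent-anchored review step might look like the following. The names (`ScopedIntent`, `build_rubric`, `review`) are hypothetical, and the `judge` callable stands in for whatever actually makes the call in your system, whether a model, a rule, or a human. The stub judge used here is a keyword check for demonstration only, not a real alignment test.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class ScopedIntent:
    macro_goal: str                  # what the orchestrator aggregates against
    micro_criterion: str             # what this sub-agent can actually evaluate
    non_goals: Tuple[str, ...] = ()


@dataclass
class ReviewResult:
    aligned: bool
    failures: List[str]


# (output, rubric question) -> True if the output passes that question
Judge = Callable[[str, str], bool]


def build_rubric(intent: ScopedIntent) -> List[str]:
    """Derive rubric questions from the stated intent, not generic quality heuristics."""
    questions = [f"Does the output satisfy: {intent.micro_criterion}?"]
    questions += [f"Does the output avoid: {ng}?" for ng in intent.non_goals]
    return questions


def review(output: str, intent: ScopedIntent, judge: Judge) -> ReviewResult:
    """Evaluate an output against the rubric derived from its intent."""
    failures = [q for q in build_rubric(intent) if not judge(output, q)]
    return ReviewResult(aligned=not failures, failures=failures)


def stub_judge(output: str, question: str) -> bool:
    # Placeholder judgment: fails any output that reports a tier 2 transfer.
    return "transferred to tier 2" not in output.lower()


intent = ScopedIntent(
    macro_goal="Reduce Q3 support escalations by 40%",
    micro_criterion="Resolve this ticket without transferring to tier 2",
)
ok = review("Reset the customer's password; ticket closed.", intent, stub_judge)
bad = review("Clear, well-written notes. Transferred to tier 2.", intent, stub_judge)
```

Note that the second output could score well on generic writing quality and still fail, because the only questions asked are the ones the intent generated.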

4. Traceability

Every artifact (document, decision, action) carries a reference to the intent that produced it. This is the audit layer. It lets you trace any result back to the goal that caused it and answer the fundamental question in post-incident reviews: did this output serve the original intent, or did it serve something else?

Traceability also surfaces atrophy in long-running workflows. When intermediate artifacts start referencing goals that have drifted from the original envelope, you can see it.
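One simple way to implement the reference, sketched here under assumptions, is to fingerprint the envelope and stamp that fingerprint on every artifact. `intent_id`, `Artifact`, and `drifted` are hypothetical names. A content hash only catches envelopes that were literally altered along the way; it says nothing about semantic drift in the artifact itself, which still needs a review step.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import Iterable, List


def intent_id(envelope: dict) -> str:
    """Stable fingerprint of an envelope: same content gives the same ID."""
    canonical = json.dumps(envelope, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


@dataclass(frozen=True)
class Artifact:
    name: str
    content: str
    intent_ref: str  # fingerprint of the envelope that produced this artifact


def drifted(artifacts: Iterable[Artifact], original_envelope: dict) -> List[str]:
    """Names of artifacts whose intent reference no longer matches the original."""
    expected = intent_id(original_envelope)
    return [a.name for a in artifacts if a.intent_ref != expected]


original = {
    "goal": "Summarize this security incident for executive leadership, "
            "emphasizing business impact",
    "non_goals": ["technical root cause analysis"],
}
# Simulate a hop that quietly rewrote the goal.
altered = dict(original, goal="Write a technical root cause analysis")

artifacts = [
    Artifact("scoping-brief", "...", intent_id(original)),
    Artifact("draft", "...", intent_id(altered)),
]
suspect = drifted(artifacts, original)
```

Sorting keys before hashing means two envelopes with the same content but different key order still resolve to the same ID.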

Three Systems Running This Pattern

Intent Thread isn’t abstract. Here’s what it looks like operating across three different agentic system types: a development framework, a work routing system, and a content pipeline. Different domains, same structural pattern.

Agentic Development Framework. A project begins with an intent document: what is being built, for whom, why, and what success looks like. That intent flows through stage gates (Discover, Design, Develop, Deliver). Each stage produces artifacts that carry the original intent forward. Stage gates don’t just check “is this phase complete?” They check conformance against intent. You can only run that conformance check if intent was present in structured form at every stage.

Agentic Work Routing System. A business goal enters as a high-level objective: reduce Q3 support escalations by 40%. The system decomposes it into epics, tasks, and subtasks, dispatching work to agents across a delegation tree. Individual agents execute specific tasks. Each task carries the original objective alongside its localized success criteria. When work is reviewed, the question isn’t just “was the task completed?” but “did this task connect back to the goal?” The intent envelope makes that evaluation possible at both levels.
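Evaluation “at both levels” implies the orchestrator needs some way to roll per-ticket outcomes up against the 40% objective. A hedged sketch of that arithmetic follows; the function names, the baseline rate, and the ticket counts are all made up for illustration, and a real system would need to define the baseline period and what counts as an escalation.

```python
from typing import Iterable


def escalation_reduction(baseline_rate: float, escalated: Iterable[bool]) -> float:
    """Fractional reduction in escalation rate relative to a baseline.

    escalated: one bool per reviewed ticket, True if it went to tier 2.
    """
    outcomes = list(escalated)
    if not outcomes:
        raise ValueError("no ticket outcomes to aggregate")
    if baseline_rate <= 0:
        raise ValueError("baseline_rate must be positive")
    current_rate = sum(outcomes) / len(outcomes)
    return (baseline_rate - current_rate) / baseline_rate


def macro_goal_met(baseline_rate: float, escalated: Iterable[bool],
                   target: float = 0.40) -> bool:
    """True if the aggregated reduction reaches the macro target."""
    return escalation_reduction(baseline_rate, escalated) >= target


# Illustrative numbers only: a 25% baseline escalation rate, 20 tickets reviewed.
on_track = macro_goal_met(0.25, [True] * 2 + [False] * 18)   # 10% current rate
off_track = macro_goal_met(0.25, [True] * 4 + [False] * 16)  # 20% current rate
```

Each sub-agent only ever answers the local question (did this ticket escalate?); the macro comparison lives with the orchestrator, which is the only party holding enough outcomes to compute it.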

Agentic Content Pipeline. An initiative intent document defines the goal: audience, outcome, what the content library should accomplish. That intent flows into a scoping brief, into a draft, into QA review. The QA step doesn’t evaluate against general quality heuristics. It evaluates against the original initiative intent criteria. In practice, that means a draft can be well-written and still get rejected: an early version of this post was returned because it optimized for broad accessibility over practitioner precision. Technically fine, wrong goal. The draft passes when it serves the stated intent, not when it reads well. Every artifact is traceable back to the intent that created it.

Why This Is a First-Class Architectural Concern

Structurally, Intent Thread connects three elements of AFAS (Architecture Framework for Agentic Systems): the Instructions/context primitive (what an agent knows at invocation), Delegation chains (the propagation path intent must travel), and Conformance checking in the control plane (runtime verification that execution stayed connected to intent). You cannot run the last step without the first two. A conformance check with nothing to check against is just a quality review.

Intent Thread is a first-class concern in AFAS: not a soft operational guideline, but the design pattern that makes runtime governance possible. Task completion is not outcome alignment. An agent can complete every assigned task, pass every review step, and still fail the original intent. If your evaluation framework only checks whether steps finished, you’re auditing a process, not governing a system.

If you’re building or governing a multi-agent system and you’ve hit the “we asked for X and got Y” problem more than once, this is the structural fix. Add the envelope. Layer the context. Anchor review to intent. Trace everything back.

The thread doesn’t maintain itself.

What’s Next

The control plane post addresses governance directly: how policies are defined, where they’re enforced, and why those are different architectural problems. Intent Thread sets up that conversation cleanly. Conformance checking is the control that closes the loop, and now you understand what it’s actually checking against.

AFAS (Architecture Framework for Agentic Systems). Third post in an ongoing series. Previous posts: The Architecture Gap · What an Agent Actually Is.