Governance at Velocity and Scale

Design-time governance and runtime governance are different problems. Design-time governance produces architectural decisions, policy definitions, and access controls: the artifacts that describe what the system should do. Runtime governance enforces those decisions at the velocity and scale at which the system actually operates.

Agentic systems make this harder than anything that came before. Non-deterministic reasoning, dynamic context injection, recursive delegation, and multi-model pipelines mean that runtime behavior isn’t fully specifiable at design time. You can’t certify what you can’t fully predict. You can’t audit quarterly what’s happening in milliseconds.

This is the governance timescale problem. The previous posts in this series built the vocabulary (primitives) and the pattern (Intent Thread). This post covers enforcement: how governance actually operates at runtime.

What Runtime Governance Actually Is

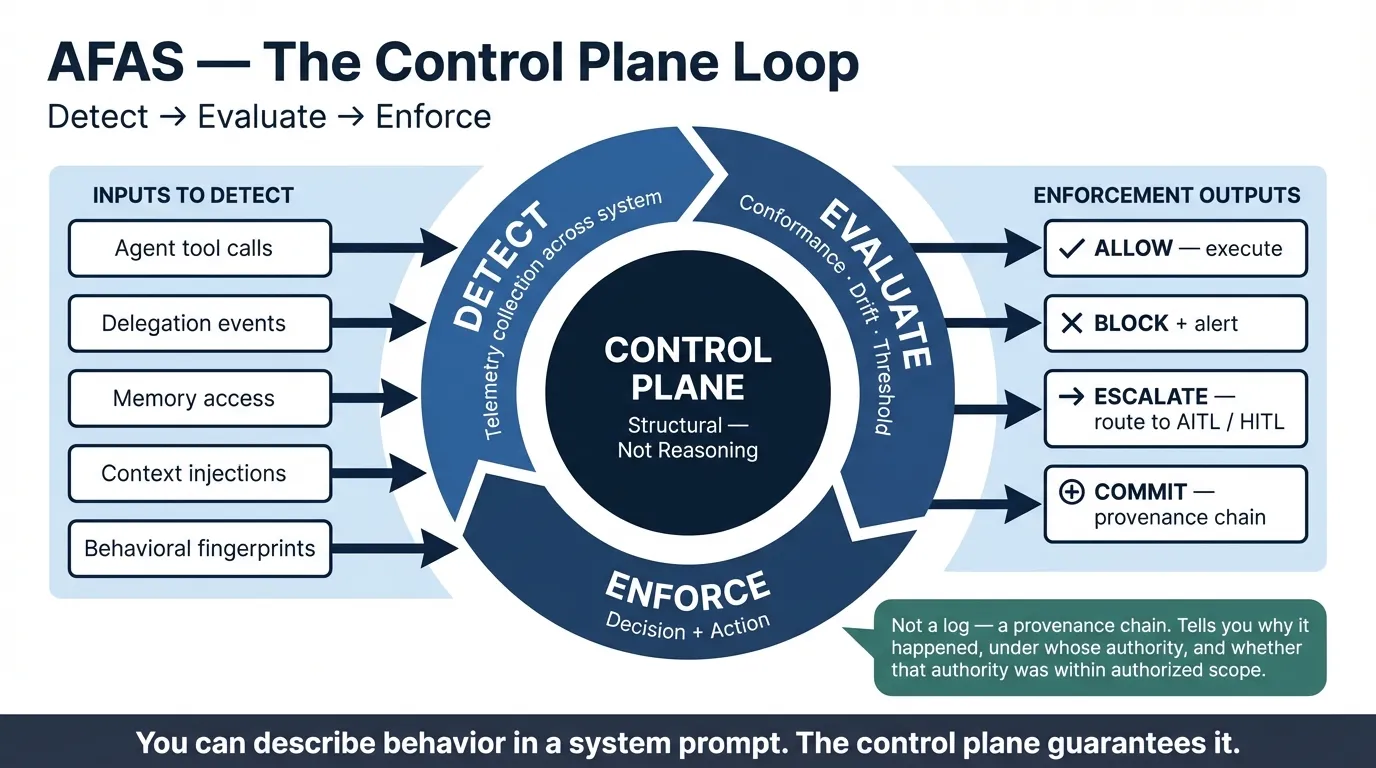

Runtime governance isn’t logging. Logging records what happened. Runtime governance evaluates what’s happening and responds.

Three mechanisms make up runtime governance in AFAS.

Conformance checking. At execution time, the control plane evaluates whether the agent’s action conforms to its authorized policy. Not “did the agent succeed?” (that’s output evaluation). Conformance checking asks: did the execution stay within the parameters the architecture authorized? Did this agent call this tool under appropriate delegation authority? Is this context injection within the data access policy for this agent in this context? Did the output satisfy the stated constraints?

The Intent Thread pattern from the previous post connects to enforcement here. The intent envelope defines success criteria. Conformance checking evaluates whether execution met them at the structural layer, not the output layer. Without the envelope, conformance checking has nothing to check against. With it, you can verify alignment at every delegation hop, not just at final output. Structural conformance and semantic output validation are different architectural concerns: the control plane answers whether authorized parameters were respected; a separate output validation layer answers whether the result was good. Conflating them assigns reasoning responsibilities to infrastructure that shouldn’t have them.
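To make the structural questions concrete, here is a minimal sketch of a conformance check. It assumes a hypothetical `Policy` record holding the authorized parameters and a hypothetical `Action` record describing one attempted call; neither name comes from AFAS, and a real control plane would check far more than three fields. Note what it does not do: it never inspects whether the output was good.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    """Hypothetical authorized parameters for one agent."""
    allowed_tools: frozenset
    allowed_data_scopes: frozenset
    max_delegation_depth: int


@dataclass(frozen=True)
class Action:
    """Hypothetical record of one attempted action."""
    agent_id: str
    tool: str
    data_scopes: frozenset
    delegation_depth: int


def check_conformance(action: Action, policy: Policy) -> list:
    """Return structural violations; an empty list means the action conforms."""
    violations = []
    if action.tool not in policy.allowed_tools:
        violations.append(f"tool not authorized: {action.tool}")
    extra = action.data_scopes - policy.allowed_data_scopes
    if extra:
        violations.append(f"data scopes outside policy: {sorted(extra)}")
    if action.delegation_depth > policy.max_delegation_depth:
        violations.append("delegation depth exceeds policy")
    return violations
```

Returning a list of violations rather than a boolean keeps the result usable by the audit trail: the record can say which parameter was exceeded, not just that something was.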

Drift detection. Individual conformance checks evaluate single actions. Drift detection evaluates patterns over time. Behavioral drift in an agentic system typically isn’t a sudden failure. It’s a gradual shift in which tools agents prefer, which reasoning paths they take, which delegation patterns they use. Some drift is noise. Some indicates that agent behavior has moved outside the policy envelope without any single action triggering a conformance violation.

Drift detection requires a baseline, the behavioral fingerprint of an agent operating within authorized parameters. It requires telemetry that captures not just what agents did, but how they decided: which tools were invoked in which order, what delegation paths were taken, how context was used. And it requires threshold policies that define when pattern deviation warrants intervention, not just observation. Baseline management is its own operational concern: when agent instructions or available tools legitimately change, the behavioral baseline must evolve with them. A stale baseline produces false positives, and false positives erode trust in the governance layer itself.
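One way to picture the baseline-plus-threshold idea is a sketch that reduces telemetry to a tool-usage distribution and compares it to the baseline. Everything here is an assumption for illustration: the choice of total variation distance as the drift score, the function names, and both threshold values. Real telemetry would also cover call ordering and delegation paths, which this sketch ignores.

```python
from collections import Counter


def tool_distribution(tool_calls):
    """Convert a sequence of tool names into relative frequencies."""
    counts = Counter(tool_calls)
    total = sum(counts.values())
    return {tool: n / total for tool, n in counts.items()}


def total_variation(baseline, observed):
    """Total variation distance: 0 means identical, 1 means no overlap."""
    tools = set(baseline) | set(observed)
    return 0.5 * sum(abs(baseline.get(t, 0.0) - observed.get(t, 0.0)) for t in tools)


def classify_drift(distance, observe_at=0.15, intervene_at=0.35):
    """Map a drift score to a response. Both thresholds are illustrative."""
    if distance >= intervene_at:
        return "intervene"
    if distance >= observe_at:
        return "observe"
    return "ok"
```

The two thresholds mirror the distinction between observation and intervention. When an agent’s tools legitimately change, the baseline passed to `total_variation` has to be rebuilt, or the score will flag the change itself as drift.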

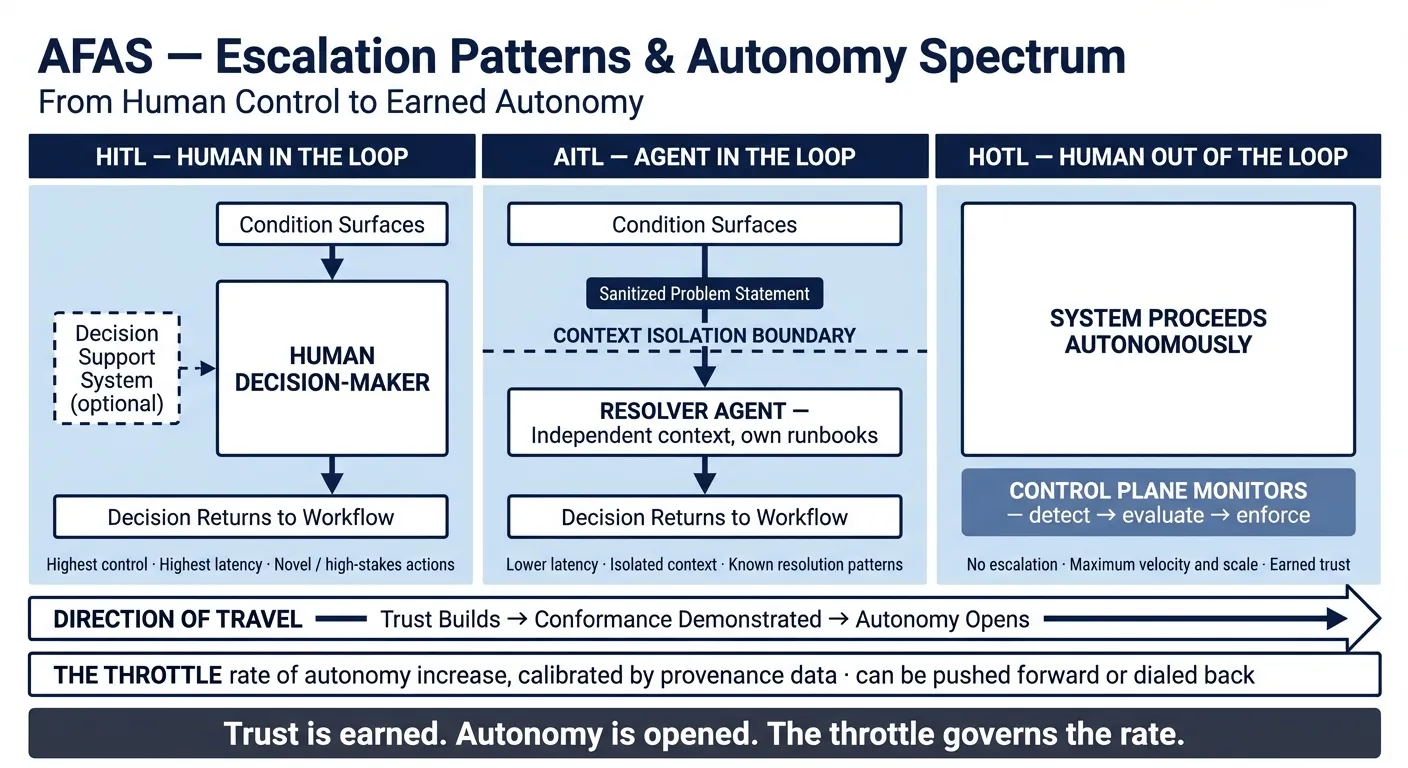

Escalation patterns. Not all runtime governance can be automated. Some conditions can’t be resolved by the agent that surfaces them. The stakes are too high, the context is too ambiguous, or the situation is genuinely novel. When an agent can’t proceed, where that escalation goes and under what conditions is itself a governance decision.

Escalation Patterns

Not every unresolved condition belongs at the same destination. An agent may hit a conflicting constraint, a novel context, or an authorization ambiguity, and each may call for a different resolver. Three patterns apply, and they sit on a spectrum.

Agent-in-the-Loop (AITL). The first stop isn’t always a human. Another agentic system, operating independently with its own runbooks, playbooks, and broader operational visibility, may be the right resolver. That system receives only the surface of the problem: what the agent encountered and what it needs resolved. It doesn’t inherit the full context of the originating workflow. That boundary is deliberate. Keeping the resolution system isolated from the originating system’s operational context is a design principle. The resolver makes a cleaner decision precisely because it isn’t carrying the originating agent’s full load. What crosses the boundary is a structured escalation payload: the intent envelope summary, the specific constraint or ambiguity encountered, the action the agent wanted to take, and the options it sees. The operational context (the originating agent’s working memory, conversation state, full tool history) stays behind. The problem crosses the boundary. The context doesn’t.
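The boundary can be sketched as a data structure. The four fields below follow the payload described above; the class name, field names, and the shape of `agent_state` are hypothetical. The point of the sketch is that the payload is built by copying named fields, so working memory and tool history cannot leak across by accident.

```python
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class EscalationPayload:
    """What crosses the boundary to the resolver. Field names are illustrative."""
    intent_summary: str    # summary of the intent envelope
    issue: str             # the constraint or ambiguity encountered
    proposed_action: str   # what the agent wanted to do
    options: tuple         # the options the agent sees


def build_payload(agent_state: dict) -> EscalationPayload:
    """Copy only the four boundary fields; everything else stays behind."""
    return EscalationPayload(
        intent_summary=agent_state["intent_summary"],
        issue=agent_state["issue"],
        proposed_action=agent_state["proposed_action"],
        options=tuple(agent_state["options"]),
    )
```

An allow-list of fields is a deliberate choice here. Stripping known-sensitive keys from a copy of the full state would fail open the first time a new key is added to the originating agent.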

Human-in-the-Loop (HITL). Some conditions require a human. When a condition reaches a human, that human may decide immediately. They know the situation, they’re confident in the call, no consultation needed. Or they may reach for support before deciding: a risk model, a scenario analysis tool, an intelligence co-pilot. Either is valid. When a human does consult a decision support system, that system is separate from the originating workflow, helping the human evaluate the decision without inheriting the agent’s operational context. The human bridges the two and carries the decision back. Decision support systems purpose-built for high-stakes, uncertain decisions (agentic workflows in their own right) are an emerging pattern worth its own exploration.

Human-Out-of-the-Loop (HOTL). The system proceeds autonomously. No escalation triggers. The control plane monitors silently, with conformance checking and drift detection running continuously, but no external decision is required. This is the design destination for mature agentic systems operating under earned trust: not a failure of governance but its proof.

The direction of travel, and back. HITL, AITL, HOTL is a spectrum, not a one-way progression. The design goal is movement toward HOTL as trust accumulates. But trust is revocable. A compliance event, a model update, a regulatory change, or a pattern of drift: any of these warrants dialing back. The throttle goes both directions. The trust ladder describes the criteria for moving between levels, what evidence must accumulate before autonomy increases. The throttle is the architectural mechanism that translates that earned trust into actual autonomy, and dials it back when conditions change. One is the policy. The other is the control.

Agentic systems must be designed with this dial as a first-class architectural control, not added after deployment when trust needs calibrating.
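A sketch of the policy/control split, under loud assumptions: the trust ladder is reduced to a single made-up evidence metric (a count of conformant runs), the required counts are invented, and the class and method names are hypothetical. What it tries to show is the asymmetry: moving up requires evidence and happens one level at a time, while dialing back requires no evidence at all.

```python
from enum import IntEnum


class Autonomy(IntEnum):
    HITL = 0
    AITL = 1
    HOTL = 2


# Trust ladder (the policy): evidence required to reach each level.
# The numbers are illustrative, not recommendations.
TRUST_LADDER = {Autonomy.AITL: 100, Autonomy.HOTL: 1000}


class Throttle:
    """The control: applies the ladder upward, and dials back on demand."""

    def __init__(self):
        self.level = Autonomy.HITL

    def raise_if_earned(self, conformant_runs: int) -> Autonomy:
        """Move up at most one level, and only if the ladder criterion is met."""
        nxt = min(self.level + 1, Autonomy.HOTL)
        if nxt != self.level and conformant_runs >= TRUST_LADDER[Autonomy(nxt)]:
            self.level = Autonomy(nxt)
        return self.level

    def dial_back(self, to: Autonomy = Autonomy.HITL) -> Autonomy:
        """Lower autonomy unconditionally; never raises it."""
        self.level = min(self.level, to)
        return self.level
```

A compliance event or a model update would call `dial_back`; nothing short of accumulated evidence can call the level back up.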

Output validation is a separate governance mechanism, not an escalation pattern in the same sense. It’s a review gate: a human reviews produced artifacts before downstream use or publication. It catches intent alignment failures without interrupting execution. The intent-anchored review step from the Intent Thread pattern is this mechanism applied structurally. It belongs in the conformance layer, not the escalation taxonomy.

The control plane routes escalations based on action type, risk level, and reversibility. Getting that routing policy right is as much an architectural decision as any other.
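As a rough illustration, a routing policy over those three inputs might look like the sketch below. The specific rules, the risk labels, and the `SENSITIVE_ACTION_TYPES` set are all assumptions made up for the example; the real decisions belong to whoever owns the architecture.

```python
# Hypothetical action types that always get a second opinion.
SENSITIVE_ACTION_TYPES = frozenset({"payment", "external_send"})


def route_escalation(action_type: str, risk: str, reversible: bool) -> str:
    """Return 'HITL', 'AITL', or 'HOTL' for a pending action. Rules are illustrative."""
    # Irreversible or high-risk actions go to a human.
    if risk == "high" or not reversible:
        return "HITL"
    # Medium risk or sensitive action types go to a resolver agent.
    if risk == "medium" or action_type in SENSITIVE_ACTION_TYPES:
        return "AITL"
    # Everything else proceeds autonomously under monitoring.
    return "HOTL"
```

Keeping the rules in one small, explicit function is the design choice worth copying: the routing policy can be reviewed, versioned, and tested like any other architectural artifact.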

The Control Plane as Enforcement Layer

The control plane is where runtime governance lives architecturally. It’s the layer that intercepts execution, evaluates conformance, routes escalations, manages drift signals, and writes the audit trail. It’s distinct from the agents themselves. It has no reasoning engine, no context window, no delegation authority. It’s static infrastructure that governs dynamic behavior.

The control plane’s operating loop is: detect → evaluate → enforce. The sensing layer collects telemetry across the system: what actions agents took, which tools were invoked, which delegation paths were exercised. The evaluation layer correlates that telemetry against policy and behavioral baselines (conformance checking, drift detection, threshold monitoring). The enforcement layer acts: allow, block, escalate, alert, or commit to the provenance chain. This pattern of collecting signals, correlating against baselines, and triggering calibrated responses is the same logic that Security Information and Event Management (SIEM) systems have run in cybersecurity for decades, and that User and Entity Behavior Analytics (UEBA) applies to anomaly detection. The primitives are different; the architectural logic is the same. That parallel deserves deeper treatment than this post can give it.
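The loop can be sketched as three small functions, one per layer. This is a deliberately reduced version: it models only allow, block, and escalate, the event fields and the `allowed_tools` mapping are hypothetical, and a plain list stands in for the provenance chain.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    BLOCK = "block"
    ESCALATE = "escalate"


def detect(raw_event: dict) -> dict:
    """Sensing: normalize raw telemetry into the fields evaluation needs."""
    return {
        "agent": raw_event["agent"],
        "tool": raw_event["tool"],
        "risk": raw_event.get("risk", "low"),
    }


def evaluate(signal: dict, allowed_tools: dict) -> Decision:
    """Evaluation: correlate the signal against policy."""
    if signal["tool"] not in allowed_tools.get(signal["agent"], set()):
        return Decision.BLOCK
    if signal["risk"] == "high":
        return Decision.ESCALATE
    return Decision.ALLOW


def enforce(signal: dict, decision: Decision, audit: list) -> bool:
    """Enforcement: record the decision and report whether execution may proceed."""
    audit.append({**signal, "decision": decision.value})
    return decision is Decision.ALLOW


def control_plane_step(raw_event: dict, allowed_tools: dict, audit: list) -> bool:
    """One pass through detect, evaluate, enforce."""
    signal = detect(raw_event)
    decision = evaluate(signal, allowed_tools)
    return enforce(signal, decision, audit)
```

Every decision is recorded, including the allows. None of the three functions reasons about the task; they compare fields against policy, which is what keeps the layer deterministic.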

An agent that governs itself is behavioral safety. A control plane that governs the system is structural safety. An agent’s instructions can drift, its context can be manipulated, its reasoning can produce unexpected outputs. The control plane’s enforcement logic is explicit, auditable, and independent of agent behavior.

You can write the most comprehensive policy in the world in an agent’s system prompt. If there’s no control plane to enforce it, you’ve described behavior. You haven’t guaranteed it. A reasonable question follows: who governs the governance layer? The control plane isn’t immune to failure, but its failure modes are deterministic and auditable in ways agent reasoning isn’t. A misconfigured policy is a bug you can find and fix. An agent that reasons its way around a constraint is harder to detect, harder to reproduce, and harder to explain to a regulator.

The control plane is also where the AFAS primitives model connects to operations. Every structural concern from the system primitives (trust boundaries, delegation chains, observability) is enforced here. Trust boundaries are checked at call time, not assumed from topology. Delegation chains are validated against attenuation policy at every hop. Observability isn’t instrumented individually per agent. It’s a system-level telemetry layer with correlation identifiers that survive delegation boundaries.
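Validating delegation chains against attenuation policy can be sketched as a subset check at each hop. The representation is an assumption: a chain is modeled as a list of `(agent_id, scopes)` pairs running from the root authority downward, and "attenuation" is taken to mean that a delegate may never hold a scope its delegator lacks.

```python
def validate_delegation_chain(chain):
    """Check that scopes only narrow along the chain.

    chain: list of (agent_id, scopes) pairs, root authority first.
    Returns the index of the first hop that widens scope, or None if
    attenuation holds at every hop.
    """
    for i in range(1, len(chain)):
        parent_scopes = set(chain[i - 1][1])
        child_scopes = set(chain[i][1])
        if not child_scopes <= parent_scopes:
            return i
    return None
```

Returning the offending hop rather than a boolean gives the control plane something specific to block and the audit trail something specific to record.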

The Audit Layer

Governance at velocity produces evidence at velocity. The audit layer is the record of every consequential action taken by every agent in the system: what was called, by whom, under what delegation authority, with what inputs, producing what outputs.

Not a log, but a provenance chain. The distinction matters for compliance and post-incident review. A log tells you what happened. A provenance chain tells you why it happened, under whose authority, and whether that authority was within the authorized scope.

In a regulated enterprise environment, the audit layer is the answer when compliance asks: “show us the decision trail for this action.” Without it, the answer is “here’s a log file and here’s the LangChain config,” the situation T1 described. With it, you can trace any output back through the delegation chain to the human authority that authorized the scope.
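A minimal sketch of the difference between a log line and a provenance entry follows. Each entry carries the authority and scope it acted under, and is linked to the previous entry by a SHA-256 hash. The hash linking is an assumption of this sketch, a common way to make a record tamper-evident, not something AFAS specifies; the field names are hypothetical too.

```python
import hashlib
import json


def _digest(record: dict) -> str:
    """Stable SHA-256 over a record's JSON form."""
    return hashlib.sha256(json.dumps(record, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(chain: list, *, actor: str, action: str, authority: str, scope: str) -> dict:
    """Append an entry recording who acted, under whose authority, within what scope."""
    prev = chain[-1]["hash"] if chain else None
    body = {"actor": actor, "action": action, "authority": authority, "scope": scope, "prev": prev}
    entry = {**body, "hash": _digest(body)}
    chain.append(entry)
    return entry


def verify(chain: list) -> bool:
    """Recompute every hash and link; False if any entry was altered or reordered."""
    prev = None
    for entry in chain:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev"] != prev or entry["hash"] != _digest(body):
            return False
        prev = entry["hash"]
    return True
```

Walking the `authority` fields backward from any entry is what answers the compliance question: each action names the delegator it acted for, back to the human who authorized the scope.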

What’s Next

Runtime governance answers the enforcement question: policies can be evaluated at velocity, drift can be detected, and escalations can be routed to the right destination, whether that is an agent, a human, or nowhere at all once trust has been earned.

The remaining question is: whose authority does the control plane enforce? When an agent invokes a tool, delegates a task, or accesses shared memory, what identity is it acting under? How is that identity verified? What does verified identity mean in a multi-agent system where no human is in the authentication loop for most actions?

That’s the trust and identity problem. It closes the loop.

AFAS (Architecture Framework for Agentic Systems). Fourth post in an ongoing series. Previous posts: The Architecture Gap · What an Agent Actually Is · The Intent Thread.