Who Is This Agent? Trust and Identity in Multi-Agent Systems

When a human submits a request to a traditional enterprise system, identity is well-understood. The user authenticates. The session carries credentials. Authorization checks verify what that session can do. The mental model is simple because identity maps to a single human principal with persistent, auditable context.

In a multi-agent system, that model breaks at the first delegation hop.

An orchestrator receives a user request. It spawns three sub-agents. One of those spawns two more. Each accesses tools, reads shared memory, writes to external systems, all operating under some interpretation of “the user’s original request.” But which agent is acting? Under whose authority? Verified by whom? And if something goes wrong, who is responsible?

Most enterprise IAM stacks weren’t designed to model dynamic, multi-hop delegation with explicit attenuation and end-to-end provenance at runtime. The gap isn’t that identity infrastructure doesn’t exist. It’s that the semantics of delegated agentic authority weren’t part of the design target.

The Four Identity Problems

Multi-agent systems surface four distinct identity problems simultaneously.

Claimed vs. verified identity. Every agent has an identity: name, role, declared purpose, claimed capabilities. This is the identity primitive from the AFAS model: what the agent claims about itself. The critical word is “claims.” An agent can claim to be an authorized orchestrator, an approved specialist, or a trusted system component. Without verification infrastructure, claimed identity is the only identity in the system. In practice, verified identity extends beyond authenticating a name to attesting an execution context, including what model, binary, or policy bundle is actually running. A name without a verified execution context is still a claim.

Verification is a control plane concern, external to any individual agent, enforced at the structural layer. Every agent that receives a task payload, every tool that processes a request from an agent, needs to verify the identity of the calling entity. Assumed identity based on position in a delegation chain is not verification. It’s trust without foundation. Zero trust (never trust, always verify) is the architectural principle this problem demands. The mechanisms appear in the section below; this is the reason they’re required.

Cross-agent authorization. Verified identity solves “who is this agent?” It doesn’t solve “what is this agent permitted to do?” Authorization in a multi-agent system operates across multiple resource types simultaneously:

- Which tools can this agent invoke, under what conditions, with what scope?

- Which memory stores can this agent read or write?

- Which agents can this agent delegate to, and under what constraints?

- What is this agent explicitly prohibited from doing?

This is the capability versus authorization distinction from the primitives model (what an agent can do versus what it’s permitted to do), now operating at system scale. Shared tool registries, shared memory stores, and inter-agent communication channels are all access-controlled resources. The access policies for each must be defined, stored, and enforced at the control plane, not assumed from topology or inferred from the agent’s stated role. Explicit prohibition design (the deny side of authorization) is as architecturally important as permission grants. In complex systems you cannot enumerate every permission in advance, so you must also define what agents are never permitted to do, regardless of context or claimed authority. Architecturally, this requires a strict decoupling: the control plane acts as the Policy Decision Point (PDP) evaluating access, while the API gateways, memory stores, and tool registries act as Policy Enforcement Points (PEPs) blocking unauthorized execution.

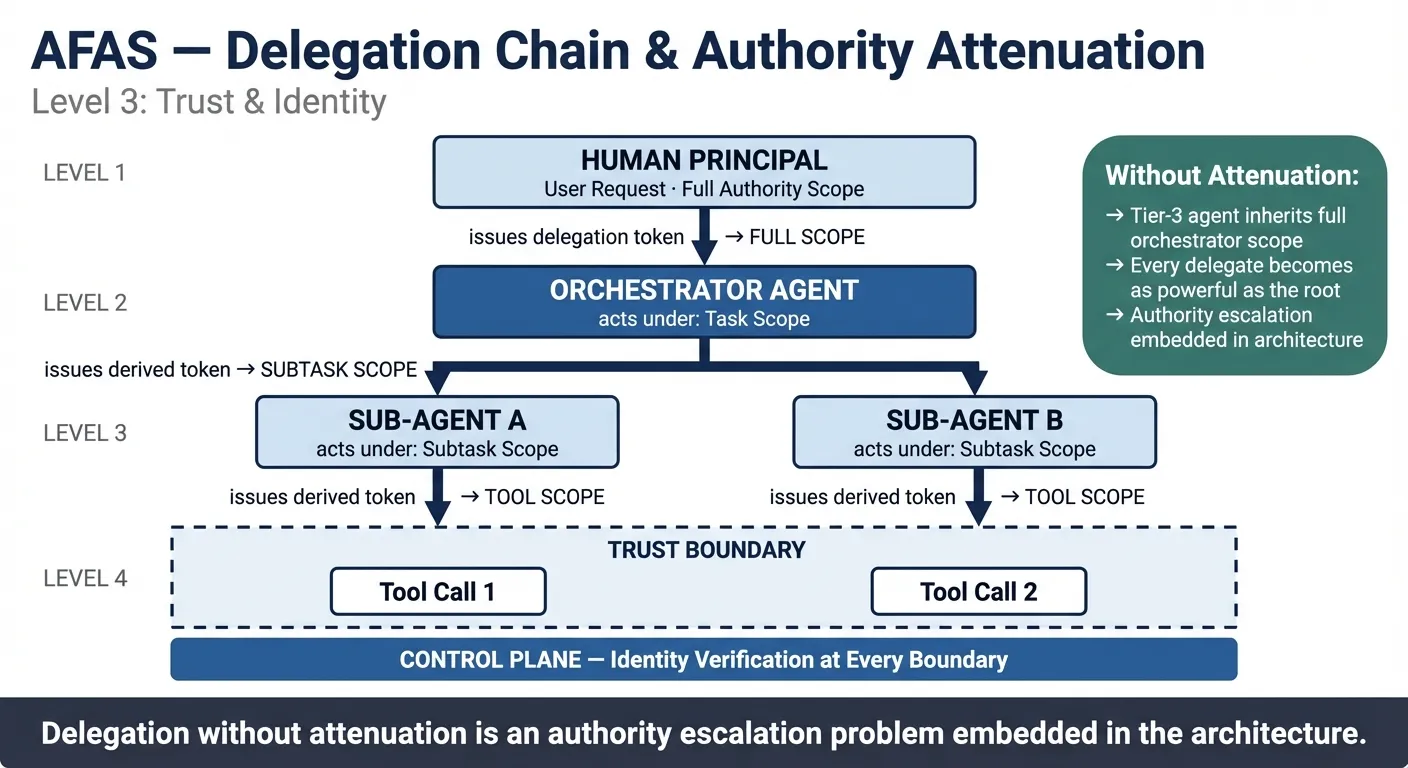
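As a minimal sketch of the deny side of authorization, the decision function below applies deny-overrides evaluation with default deny. The `Policy` shape, the `decide` function, and the action strings are all hypothetical, chosen only to illustrate the idea; they are not part of AFAS or of any particular policy engine.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase


@dataclass(frozen=True)
class Policy:
    """Hypothetical per-agent policy held by the control plane (the PDP)."""
    allow: frozenset[str] = frozenset()
    deny: frozenset[str] = frozenset()  # explicit prohibitions


def decide(policy: Policy, action: str) -> bool:
    """Deny-overrides evaluation: a matching deny rule always wins,
    and anything not explicitly allowed is refused by default."""
    if any(fnmatchcase(action, pattern) for pattern in policy.deny):
        return False
    return any(fnmatchcase(action, pattern) for pattern in policy.allow)


# Illustrative policy for a summarization agent (all names are made up).
summarizer = Policy(
    allow=frozenset({"tool:summarize", "memory:read:*"}),
    deny=frozenset({"memory:read:secrets", "db:write:*", "delegate:*"}),
)

print(decide(summarizer, "memory:read:case-notes"))  # allowed by the wildcard grant
print(decide(summarizer, "memory:read:secrets"))     # refused: deny beats the wildcard
print(decide(summarizer, "db:write:orders"))         # refused: explicitly prohibited
print(decide(summarizer, "tool:send-email"))         # refused: never granted
```

In this sketch the enforcement points would call `decide` and block on `False`; the agent itself never evaluates its own policy.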

Delegation chain identity. The hardest problem. When an orchestrator delegates a task to a sub-agent, that agent acts “on behalf of” the orchestrator. But what does that mean for authorization? Does the sub-agent inherit the orchestrator’s full authority? If it delegates further, does its delegate inherit the same scope?

Many early agentic implementations treat delegation as authority propagation: the delegate receives what the delegator had. That’s an authority escalation problem embedded in the architecture. The correct model is authority attenuation: each delegation hop receives the scope required for its specific task, not the full scope of its parent. The delegation token carries the scope constraint. Authority narrows at each hop, deliberately.

An unbounded delegation chain, where tier-3 agents operate with the full authority of the top-level orchestrator because no attenuation policy was defined, isn’t an access control failure in the usual sense. It’s an architectural gap: the system has delegation without constraint design. A document summarization agent delegated by an orchestrator with database write access shouldn’t inherit that write access, but in a propagation model, it does. Every delegate becomes as powerful as the root.
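The difference between propagation and attenuation can be sketched in a few lines. The `DelegationToken` class below is a hypothetical in-memory stand-in for a real signed token: the only rule it enforces is that a derived token's scopes must be a subset of the parent's, so authority can narrow at each hop but never widen.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DelegationToken:
    """Hypothetical token shape: who issued it, who holds it, what it permits."""
    issuer: str
    holder: str
    scopes: frozenset[str]

    def delegate(self, to: str, scopes: set[str]) -> "DelegationToken":
        """Issue a derived token. Requested scopes must be a subset of this
        token's scopes, so authority can only narrow at each hop."""
        requested = frozenset(scopes)
        if not requested <= self.scopes:
            extra = sorted(requested - self.scopes)
            raise PermissionError(f"cannot delegate scopes not held: {extra}")
        return DelegationToken(issuer=self.holder, holder=to, scopes=requested)


root = DelegationToken(
    issuer="control-plane",
    holder="orchestrator",
    scopes=frozenset({"db:write", "db:read", "docs:read"}),
)

# The summarizer gets only what its task needs; db:write does not travel with it.
summarizer_token = root.delegate("summarizer", {"docs:read"})

try:
    # A tier-3 delegate cannot regain authority its parent never received.
    summarizer_token.delegate("helper", {"docs:read", "db:write"})
except PermissionError as err:
    print(err)
```

A production system would carry this constraint in a signed token and check it at the issuer; the subset test is the part that matters architecturally.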

Provenance records must capture both the actor (the agent that executed the action) and the on-behalf-of principal (the entity whose authority the actor is exercising). Collapsing these into a single identity field makes post-incident analysis significantly harder.
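One way to picture the two-field requirement is a record type that keeps the actor and the on-behalf-of principal separate. The field names, the `token_id` identifier, and the sample principals below are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProvenanceRecord:
    actor: str         # the agent that executed the action
    on_behalf_of: str  # the principal whose authority was exercised
    action: str
    token_id: str      # hypothetical id of the authorizing delegation token


records = [
    ProvenanceRecord("compliance-review-agent", "user:alice", "read:exceptions:case-47", "tok-2"),
    ProvenanceRecord("policy-lookup-agent", "user:alice", "read:policy-library:section-4", "tok-3"),
    ProvenanceRecord("policy-lookup-agent", "user:bob", "read:policy-library:section-9", "tok-7"),
]


def acting_for(log: list[ProvenanceRecord], principal: str) -> list[ProvenanceRecord]:
    """Everything done under one principal's authority, whichever agent did it."""
    return [r for r in log if r.on_behalf_of == principal]


def performed_by(log: list[ProvenanceRecord], actor: str) -> list[ProvenanceRecord]:
    """Everything one agent did, whoever it was acting for."""
    return [r for r in log if r.actor == actor]


for record in acting_for(records, "user:alice"):
    print(record.actor, record.action)
```

With a single collapsed identity field, only one of these two queries can be answered directly.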

Accountability. Identity and authorization tell you who did what and whether they were permitted to do it. They don’t tell you who is responsible when something goes wrong. When a delegation chain of five agents produces an output that causes harm, existing accountability frameworks have no direct answer. They were built for human decision-makers, not autonomous execution chains. The architecture’s answer is provenance: a tamper-evident record of every consequential action, the delegation token that authorized it, and the human governance chain ultimately responsible for the system’s scope. This is the fourth problem and the hardest to close. The expanded treatment follows in The Accountability Gap, below.

Zero-Trust Applied to Agents

The zero-trust principle (never trust, always verify) was developed for network architecture but applies directly to multi-agent identity. The operating assumption: no agent should be trusted based on position in the topology, claimed identity, or prior session state. Every boundary, every action, requires verification.

The implementation primitives already exist: SPIFFE/SPIRE for workload identity, mTLS for mutual authentication, OAuth 2.0 token exchange for scoped delegation. What’s missing isn’t the mechanism. It’s the semantic model for agentic delegation that these mechanisms need to enforce.

In practice, this requires three architectural elements.

Scoped, short-lived tokens. Each delegation hop issues a token scoped to the specific task and constrained by attenuation policy. Tokens are lease-based and short-lived, renewable under policy for long-running tasks, and revocable by the issuing authority. Durable credentials that outlive their task scope are an architectural smell. An agent cannot use an orchestrator’s token directly. It uses a derived, narrower token issued at delegation time. The token carries the scope and the provenance: who issued it, for what purpose, with what constraints. This does not require inventing new cryptography; existing workload identity standards like SPIFFE/SPIRE and OAuth 2.0 token exchange can be extended to carry agent-specific attenuation payloads.

Revocation must propagate: when a parent agent’s delegation token is invalidated, derived tokens downstream must also be invalidated. The mechanism (push-based revocation, short lease windows that force re-verification, or a revocation list checked at enforcement points) is an implementation choice. The requirement is architectural.
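As one possible reading of that requirement, the sketch below combines short leases with a revocation set checked at enforcement time. `TokenRegistry` and `Lease` are hypothetical names, and the registry is a plain in-memory object; a token counts as valid only if it and every ancestor in its delegation chain are known, unexpired, and not revoked.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Lease:
    token_id: str
    parent_id: Optional[str]  # None for a root token
    expires_at: float         # seconds, on the same clock as `now`


class TokenRegistry:
    """Hypothetical in-memory registry consulted by enforcement points."""

    def __init__(self) -> None:
        self._leases: dict[str, Lease] = {}
        self._revoked: set[str] = set()

    def issue(self, token_id: str, parent_id: Optional[str], now: float, ttl: float) -> Lease:
        if token_id in self._leases:
            raise ValueError(f"token id already issued: {token_id}")
        if parent_id is not None and parent_id not in self._leases:
            raise ValueError(f"unknown parent token: {parent_id}")
        lease = Lease(token_id, parent_id, now + ttl)
        self._leases[token_id] = lease
        return lease

    def revoke(self, token_id: str) -> None:
        self._revoked.add(token_id)

    def is_valid(self, token_id: str, now: float) -> bool:
        """Walk up the delegation chain; any revoked or expired ancestor
        invalidates every token derived from it."""
        current: Optional[str] = token_id
        while current is not None:
            lease = self._leases.get(current)
            if lease is None or current in self._revoked or now >= lease.expires_at:
                return False
            current = lease.parent_id
        return True


registry = TokenRegistry()
registry.issue("root", None, now=0.0, ttl=600.0)
registry.issue("child", "root", now=0.0, ttl=600.0)
registry.issue("grandchild", "child", now=0.0, ttl=60.0)

print(registry.is_valid("grandchild", now=30.0))  # whole chain is live
registry.revoke("child")
print(registry.is_valid("grandchild", now=30.0))  # parent revoked, so the derived token fails
print(registry.is_valid("root", now=30.0))        # the root is unaffected
```

Checking ancestors at validation time is only one of the options named above; push-based revocation would trade the chain walk for an invalidation message to each enforcement point.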

Boundary verification at every hop. Tool calls, memory access, and inter-agent messages are verified at the point of call, not assumed from context. A middleware layer or gateway intercepts each call and verifies it before the resource is reached. This connects to the control plane enforcement layer from the previous post: the same detect → evaluate → enforce loop that handles conformance also handles identity verification. These aren’t separate systems.
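A gateway acting as an enforcement point might look roughly like the sketch below. The `Gateway` and `Call` classes, the tool name, and the scope strings are hypothetical, and the caller's workload identity is assumed to have been authenticated upstream (for example over mTLS); the sketch only shows the checks made at the point of call.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Call:
    caller_id: str       # workload identity, assumed already authenticated upstream
    token_holder: str    # identity the token was issued to
    token_audience: str  # service the token is intended for
    token_scopes: frozenset[str]
    tool: str
    argument: str


class Gateway:
    """Hypothetical enforcement point sitting in front of a set of tools."""

    def __init__(self, audience: str) -> None:
        self.audience = audience
        self._tools: dict[str, tuple[str, Callable[[str], str]]] = {}

    def register(self, tool: str, required_scope: str, handler: Callable[[str], str]) -> None:
        self._tools[tool] = (required_scope, handler)

    def handle(self, call: Call) -> str:
        """Verify every call before the tool is reached; nothing is assumed
        from the caller's position in the delegation chain."""
        if call.tool not in self._tools:
            raise PermissionError(f"unknown tool: {call.tool}")
        required_scope, handler = self._tools[call.tool]
        if call.caller_id != call.token_holder:
            raise PermissionError("token was not issued to this caller")
        if call.token_audience != self.audience:
            raise PermissionError("token is not intended for this service")
        if required_scope not in call.token_scopes:
            raise PermissionError(f"missing scope: {required_scope}")
        return handler(call.argument)


gateway = Gateway(audience="policy-service")
gateway.register("lookup_policy", "read:policy-library:section-4",
                 lambda section: f"text of {section}")

scopes = frozenset({"read:policy-library:section-4"})
good = Call("policy-lookup-agent", "policy-lookup-agent", "policy-service",
            scopes, "lookup_policy", "section-4")
print(gateway.handle(good))

# Same token presented by a different workload: rejected at the boundary.
replayed = Call("other-agent", "policy-lookup-agent", "policy-service",
                scopes, "lookup_policy", "section-4")
try:
    gateway.handle(replayed)
except PermissionError as err:
    print(err)
```

The handler is never invoked unless all checks pass, which is the property the paragraph above asks for.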

The context isolation principle introduced in the escalation patterns discussion (limiting what a resolver receives to the specific problem, not the originating workflow’s full memory) applies here as well. When an agent routes a condition to a resolution system, the resolver verifies the identity of the requesting agent and receives only what it needs to know, not the full operational context of the originating workflow. Identity verification crosses the boundary. Operational context doesn’t.

Mutual authentication. When two agents communicate, both verify the other: not just “is this caller authorized to call me?” but “am I authorized to respond to this caller?” An agent receiving an unexpected delegation, a request that bypassed the defined orchestration path, should not proceed on the assumption that it’s legitimate. The zero-trust model treats every unexpected call as suspect until verified.

A walk-through. A user submits a request to process a compliance exception. The orchestrator authenticates the user, then issues a delegation token to a compliance-review-agent, scoped to read:exceptions:case-47, lease duration 10 minutes, audience policy-service. That agent delegates a sub-task to a policy-lookup-agent, issuing a derived token narrowed further to read:policy-library:section-4. It cannot read the original exception record, only the referenced policy. The policy-lookup agent calls a tool through an API gateway. The gateway verifies the calling workload identity via SPIFFE/SPIRE, checks the token scope at the PDP, and executes only if both checks pass. Every action writes a tamper-evident provenance record: which agent acted, under what token, scoped to what authority, linked to the delegation that authorized it and ultimately to the human governance chain that established the policy scope. When compliance audits the exception six months later, the record doesn’t just show what happened. It shows the full delegation chain, each scoped token, and the accountability trail back to the humans who authorized the system to operate.

The Accountability Gap

As noted above, identity and authorization tell you who did what and whether they were permitted to do it. They don’t tell you who is responsible when something goes wrong.

Traditional accountability is clear: humans make decisions, humans bear responsibility. Legal, organizational, and professional accountability frameworks all assume a human decision-maker who can be identified, examined, and held to account.

Multi-agent systems complicate this in ways the existing frameworks weren’t built to handle. When a delegation chain of five agents produces an output that causes harm, which agent made the “decision”? The orchestrator that designed the decomposition? The sub-agent that executed the problematic action? The human who deployed the system? The organization that approved the use case?

The architecture answers accountability with provenance records and explicit authority assignment. Every consequential action in a governed agentic system carries a provenance chain: which agent acted, under what delegation token, with what scope, under what human-assigned authorization. The provenance chain is append-only and tamper-evident. Each action’s record includes the delegation record that authorized it, which links back to the next level up, ultimately to the human authority who established the authorization scope.
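An append-only, tamper-evident chain can be approximated with a hash chain, where each entry commits to the hash of the one before it. The `ProvenanceLog` class and its field names are assumptions for illustration. A hash chain on its own only detects edits by someone who cannot also rewrite every later hash, so a real deployment would additionally sign or externally anchor the latest hash.

```python
import hashlib
import json


class ProvenanceLog:
    """Hypothetical append-only log; each entry commits to its predecessor."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self._entries: list[dict] = []

    @staticmethod
    def _digest(body: dict) -> str:
        # Canonical JSON so the same fields always hash to the same value.
        canonical = json.dumps(body, sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

    def append(self, actor: str, action: str, token_id: str, authorized_by: str) -> dict:
        prev_hash = self._entries[-1]["hash"] if self._entries else self.GENESIS
        body = {
            "actor": actor,
            "action": action,
            "token_id": token_id,
            "authorized_by": authorized_by,  # the delegation or human authority one level up
            "prev_hash": prev_hash,
        }
        entry = {**body, "hash": self._digest(body)}
        self._entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and check each link back to the genesis value."""
        prev_hash = self.GENESIS
        for entry in self._entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(body):
                return False
            prev_hash = entry["hash"]
        return True


log = ProvenanceLog()
log.append("orchestrator", "delegate:compliance-review-agent", "tok-1", "role:compliance-policy-owner")
first_read = log.append("compliance-review-agent", "read:exceptions:case-47", "tok-2", "tok-1")
print(log.verify())  # chain is intact

first_read["action"] = "read:exceptions:case-99"  # simulate after-the-fact tampering
print(log.verify())  # the altered entry no longer matches its hash
```

Each record's `authorized_by` field points one level up the chain, which is what lets an auditor walk from an action back to the human authority that set the scope.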

When something goes wrong, the audit trail doesn’t just show what happened. It shows which governance chain (policy owners, authorization scopes, accountable roles) authorized the delegation that made the action possible. That’s how accountability survives automation: not by identifying the agent as responsible, but by tracing the action back to the human authority structure that designed and authorized the system. Provenance provides the evidence chain. What the legal and regulatory frameworks do with that evidence is a separate question the architecture can inform but not resolve. Current regulatory frameworks assign accountability to deploying organizations, not to individual software components. The provenance chain gives that organization the evidence to fulfill its accountability obligations, and to demonstrate that governance was designed in, not bolted on.

This is the accountability layer of the control plane: not just logging, but provenance. Not just “what happened” but “who authorized this chain, and when.”

Trust Architecture as a First-Class Design Concern

Trust and identity aren’t security bolt-ons. They’re structural properties that must be designed before agents are built.

A common failure pattern: teams build the agentic system first, then add IAM “later” when compliance asks. By then, the delegation patterns are hardcoded, the token model is an afterthought, and the provenance chain doesn’t exist because the system was never designed to produce it. Retrofitting trust architecture onto a deployed multi-agent system is significantly harder than designing for it from the beginning.

The AFAS trust architecture integrates three elements: the agent identity primitive (claimed identity, with verification designed at the control plane), the delegation chain model (authority attenuation at every hop, not propagation), and the control plane enforcement layer (verification at boundaries, scoped tokens, provenance records). These work as a system. A control plane without an identity model is checking against nothing. A delegation chain without attenuation policy is an authority escalation risk. An audit trail without provenance records is a compliance artifact, not governance.

This closes the trust and identity layer. The identity model, the delegation chain, the control plane, and the provenance record are a system. They work together or they don’t work. Any one in isolation leaves a gap the others can’t compensate for.

The framework is usable as-is. Build on it, adapt it to your constraints, and expect it to evolve as the systems it governs do.

AFAS (Architecture Framework for Agentic Systems). Fifth post in an ongoing series. Previous posts: The Architecture Gap · What an Agent Actually Is · The Intent Thread · Governance at Velocity and Scale.